In today's software industry, microservices architecture has revolutionized application development by offering significant benefits over the traditional monolithic approach. It enables developers to integrate new features or updates without impacting other services, and it makes individual application services simpler to scale.

Like other types of testing for this architecture, microservices performance testing is pivotal: it helps ensure smooth interactions between services as well as the responsiveness, reliability, stability, and scalability of the application.

In addition to the traditional approach to performance testing, artificial intelligence plays a crucial role in streamlining testing processes and reducing both testing time and complexity. It does so by simulating real-world usage scenarios, applying predictive analysis to mitigate performance bottlenecks, and supporting auto-scaling configurations that adapt dynamically to changing workloads.

Let's first examine the projected growth of the microservices architecture market, which is expected to increase from USD 5.49 billion to USD 21.61 billion at an 18.66% CAGR from 2021 to 2030:

Figure: Microservices Architecture Market Size Trend (CAGR of 18.66%)

How AI Is Boosting Performance Testing of Microservices

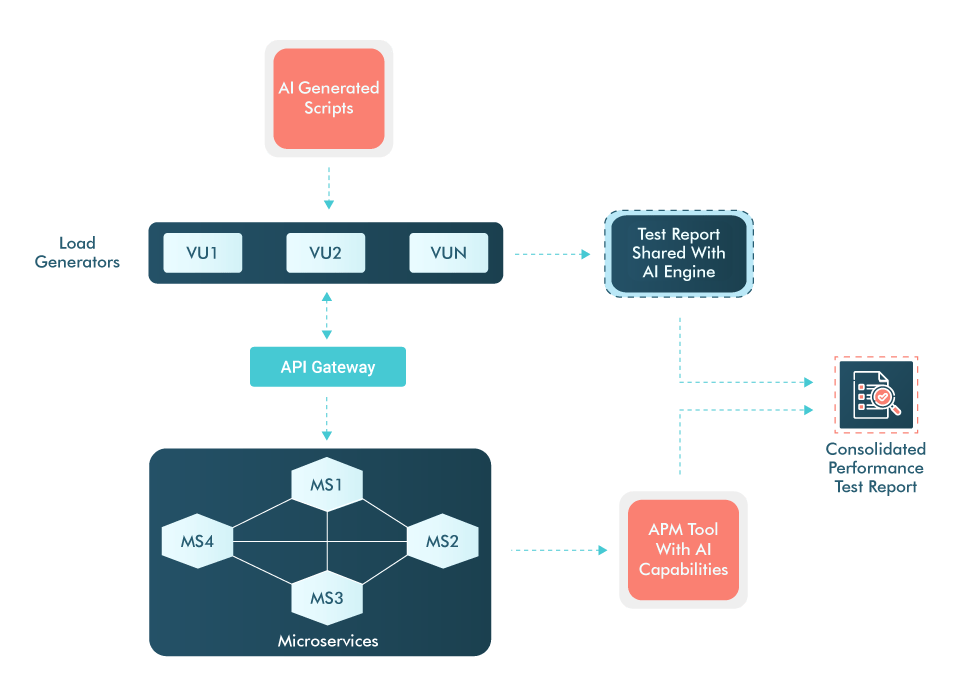

Because microservices architecture is distributed, the way data flows through different microservices is complex: service paths are dynamic and depend on which services or functionality a user chooses. This calls for a different test strategy, and including AI performance testing tools helps make testing more efficient.

Microservices Performance Testing With AI

Below are some important areas where AI can help:

- Predicting traffic patterns and auto-scaling microservices configurations accordingly

- Optimizing resource allocation

- Detecting anomalies in microservices that result in errors, crashes, or slowness

- Reducing mean time to resolution (MTTR) by quickly analyzing data sources such as logs, metrics, and traces

- Recommending fixes for performance bottlenecks using AI-powered APM tools
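
To make the anomaly detection item concrete, here is a minimal sketch that flags latency samples deviating sharply from the preceding window. It is a simple statistical baseline (a rolling z-score), not the algorithm used by any particular AI-powered APM tool. The function name, window size, threshold, and sample values are all assumptions chosen for illustration.

```python
from statistics import mean, stdev


def find_latency_anomalies(latencies_ms, window=5, threshold=3.0):
    """Return indices of samples that deviate from the preceding `window`
    samples by more than `threshold` standard deviations."""
    anomalies = []
    for i in range(window, len(latencies_ms)):
        baseline = latencies_ms[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Flat baseline: the z-score is undefined, so skip this sample.
            continue
        if abs(latencies_ms[i] - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Illustrative response times in milliseconds with one obvious spike.
samples = [100, 102, 98, 101, 99, 100, 450, 101, 99, 100]
print(find_latency_anomalies(samples))  # -> [6]
```

In practice the flagged indices would be correlated with logs and traces from the same time range, which is where tooling can shorten MTTR.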

Key Scenarios for Performance Testing

Below are the major scenarios to cover when preparing the test plan for performance testing of a microservices application:

- Mock dependent external services so that the complete workflow of a realistic scenario can be exercised

- Simulate outages to test microservices failover:

  - Induce data store outage

  - Empty data stores

  - Dependent service is down

  - Stop or restart microservices

- Run tests with and without cache, especially to expose inefficient caching in database microservices

- Run continuous tests to ensure the microservices perform well and there are no resource leaks

- Open a high number of database connections to reveal database responsiveness

- Load all microservices simultaneously to check for latency across the overall network
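
The mocking and outage scenarios above can be sketched as follows. This is a minimal illustration under stated assumptions: `StubInventoryService` and `OrderService` are hypothetical names, and the fallback to a last known value is one possible failover strategy rather than a recommended design.

```python
class StubInventoryService:
    """Hypothetical stand-in for a dependent external service."""

    def __init__(self, stock=None):
        self.stock = dict(stock or {})  # an empty dict models an empty data store
        self.available = True           # set to False to simulate an outage

    def get_stock(self, sku):
        if not self.available:
            raise ConnectionError("inventory service is down")
        return self.stock.get(sku, 0)


class OrderService:
    """Hypothetical service under test that depends on the inventory service."""

    def __init__(self, inventory):
        self.inventory = inventory
        self.last_known = {}

    def check_stock(self, sku):
        try:
            stock = self.inventory.get_stock(sku)
            self.last_known[sku] = stock
            return {"sku": sku, "stock": stock, "degraded": False}
        except ConnectionError:
            # Failover path: serve the last known value instead of crashing.
            return {"sku": sku, "stock": self.last_known.get(sku), "degraded": True}


inventory = StubInventoryService({"sku-1": 7})
orders = OrderService(inventory)

healthy = orders.check_stock("sku-1")        # dependency is up
inventory.available = False                  # induce the outage
during_outage = orders.check_stock("sku-1")  # falls back to the last known value
cold_outage = orders.check_stock("sku-2")    # nothing cached for this SKU
print(healthy, during_outage, cold_outage)
```

A performance test would run the same calls under load while toggling `available`, then compare response times and error rates before, during, and after the outage.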

Microservices Performance Bottlenecks

Several factors can make a service perform slower or faster, depending on the supporting resources and the total traffic on the network. A few common problems are listed below:

- Resource contention occurs when multiple microservices are competing for the same resource

- A failure at the service level can trigger failure of dependent services, resulting in a system-wide outage

- Increased service-to-service interactions cause higher network latency

- Resource exhaustion (CPU) can lead to service crashes

- An inefficiently configured thread pool limit or count results in a long request queue and, thus, high response times
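
The thread pool item can be illustrated with a small deterministic simulation. It assumes a burst of requests that all arrive at the same moment and a fixed service time per request; both are simplifications, and `simulate_pool` is a hypothetical helper, not part of any load testing tool.

```python
import heapq


def simulate_pool(num_requests, service_time_ms, pool_size):
    """Simulate a burst of requests arriving at t=0 on a fixed-size worker pool.

    Returns each request's response time (queue wait plus service time)."""
    # Min-heap holding the time at which each worker next becomes free.
    free_at = [0.0] * pool_size
    heapq.heapify(free_at)
    response_times = []
    for _ in range(num_requests):
        start = heapq.heappop(free_at)   # earliest available worker
        finish = start + service_time_ms
        response_times.append(finish)    # arrival was at t=0
        heapq.heappush(free_at, finish)
    return response_times


for size in (2, 5, 10):
    times = simulate_pool(num_requests=20, service_time_ms=50, pool_size=size)
    print(f"pool={size:2d} worst={max(times):.0f} ms avg={sum(times) / len(times):.0f} ms")
```

With 20 requests of 50 ms each, the worst-case response time drops from 500 ms with 2 workers to 100 ms with 10. The model ignores CPU contention, so a larger pool is not always better on real hardware.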

Tools and KPIs for Microservices Load Testing

With the adoption of AI capabilities by existing tools like NeoLoad, Functionize, New Relic, and AppDynamics, you can get better results and more insights for your application. Below are some useful KPIs to measure during load testing:

- Latency of a user request as it traverses services across the entire network

- Responsiveness of individual and dependent services

- Time microservices take to recover from cascade failures

- Hit rate and efficiency of caching mechanism

- Services' ability to handle increasing user loads without performance degradation

- Response times and throughput from different geographic locations
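
As a rough sketch of how a few of these KPIs could be computed from raw test results, the example below derives latency percentiles, throughput, and cache hit rate. The function names, the nearest-rank percentile method, and the sample numbers are assumptions for illustration. Tools such as those named above report these metrics themselves and may calculate them differently.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile for pct in (0, 100]."""
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


def summarize(latencies_ms, duration_s, cache_hits, cache_lookups):
    """Summarize one load test run as a dictionary of KPIs."""
    return {
        "p50_ms": percentile(latencies_ms, 50),
        "p95_ms": percentile(latencies_ms, 95),
        "throughput_rps": len(latencies_ms) / duration_s,
        "cache_hit_rate": cache_hits / cache_lookups,
    }


# Illustrative data: 10 requests completed in 2 seconds, 8 of 10 cache lookups hit.
latencies = [120, 95, 110, 300, 105, 98, 102, 99, 101, 97]
report = summarize(latencies, duration_s=2, cache_hits=8, cache_lookups=10)
print(report)
```

Reporting a high percentile next to the median matters because a single slow dependency, like the 300 ms sample here, barely moves the median but dominates the tail.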

Best Practices To Test Microservices-based Applications

To conduct performance testing properly for microservices-based applications, follow these best practices:

- Load test high-risk or high-usage microservices first

- Use service virtualization to mock dependent functionality that is still under development

- Take components like 'Identity Provider', 'Service Discovery' and 'CDN' into consideration

- Gain thorough knowledge of the dependencies between microservices and plan the testing accordingly

- Test performance at the UI level as well, rather than focusing only on the back-end architecture

Conclusion

The core objective of microservices performance testing is to expose components that are not optimized for scalability and to prevent crashes under heavy load in production. Performance testing is therefore vital for microservices-based applications and needs to be conducted efficiently to ensure scalability, reliability, and responsiveness.

The QASource team has good experience in performance testing across different domains. With the power of AI, they can assist with your performance testing needs to ensure the best user experience, bringing test data preparation with generative AI and real-time monitoring into play using the right set of tools. To learn more, get in touch now.

Have Suggestions?

We would love to hear your feedback, questions, comments, and suggestions. They will help us become better and more useful next time.

Share your thoughts and ideas at knowledgecenter@qasource.com