AI-based systems are non-deterministic and produce probabilistic outputs, in contrast to the fixed outputs of traditional software systems. See how QASource uses the right AI QA strategy to check the variance in results.

Rapid advancements in new-age cognitive technologies and artificial intelligence have dramatically increased the adoption of AI-powered applications and systems across industries. This, in turn, has increased demand for quality engineering of applications powered by artificial intelligence and machine learning.

Why Is QA Required for AI Applications?

Some fundamental characteristics of AI-based systems, such as non-determinism, probabilistic outputs, and lack of transparency, make it difficult to assess and ensure the quality of AI systems.

Because AI outputs are not fixed the way the outputs of traditional software systems are, traditional QA methods alone cannot effectively assure the quality of AI applications.

[Figure: Market revenue trends in the AI software world]

Difference Between Traditional Software Systems and AI Systems

Traditional software systems are rule-based: they are collections of logical units that implement an "if X, then Y" model. AI systems, by contrast, are probabilistic and non-deterministic, and they can exhibit different behaviors for the same input.

Testing AI systems is therefore fundamentally different from traditional QA. Traditional QA focuses on verifying outputs, while AI QA must rely on accuracy-based testing methodologies.
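The contrast between output verification and accuracy-based testing can be sketched in a few lines. The rule-based function is checked with exact assertions, while a hypothetical stand-in model, `noisy_classifier`, which jitters its decision threshold on every call to mimic non-deterministic behavior, is checked against an accuracy threshold and a variance budget measured over repeated runs. The dataset, `MIN_ACCURACY`, and `MAX_STDEV` are illustrative assumptions, not recommended values.

```python
import random
import statistics

def rule_based_discount(total: float) -> float:
    """Traditional logic ("if X, then Y"): same input, same output, every time."""
    return 0.1 if total >= 100 else 0.0

def noisy_classifier(x: float, rng: random.Random) -> int:
    """Hypothetical stand-in for an ML model: predicts 1 when x exceeds a
    decision threshold that jitters around 0.5 on every call."""
    threshold = rng.gauss(0.5, 0.05)
    return 1 if x > threshold else 0

def accuracy(samples, rng: random.Random) -> float:
    """Fraction of (x, label) pairs the classifier gets right."""
    correct = sum(noisy_classifier(x, rng) == label for x, label in samples)
    return correct / len(samples)

# Hypothetical labeled evaluation set: the true label is 1 when x > 0.5.
data_rng = random.Random(0)
eval_set = [(x, int(x > 0.5)) for x in (data_rng.random() for _ in range(1000))]

# Traditional QA: verify exact outputs.
assert rule_based_discount(150) == 0.1
assert rule_based_discount(50) == 0.0

# AI QA: measure accuracy across repeated runs and check its spread.
scores = [accuracy(eval_set, random.Random(seed)) for seed in range(20)]
mean_acc = statistics.mean(scores)
spread = statistics.stdev(scores)
print(f"mean accuracy = {mean_acc:.3f}, std dev = {spread:.4f}")

MIN_ACCURACY = 0.90  # hypothetical acceptance threshold
MAX_STDEV = 0.02     # hypothetical variance budget
assert mean_acc >= MIN_ACCURACY, "model is not accurate enough"
assert spread <= MAX_STDEV, "results vary too much between runs"
```

The AI-side assertions never check a single prediction; they check aggregate behavior, which is the only stable thing a probabilistic system offers.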


Traditional Software Systems

Deterministic:

- Definite input and output.

- Functions are built from if-else statements and loops that handle all possible inputs and generate the expected output as designed.

- Development: Traditional software systems are collections of functions and modules designed to meet the requirements. Every input should generate a fixed output that satisfies the expected result or behavior.

- Accuracy: A well-developed and well-tested system always works for the set of inputs defined in the requirements and always generates the desired result.

- Defects: Traditional software systems can easily be tested against the set of inputs defined in the requirements, and defects can be fixed by changing the functional logic and code.


AI Systems

Non-deterministic:

- No definite input and output.

- Instead of applying fixed rules or logic to input data to generate a fixed output, AI systems process and analyze data to predict the most likely outcomes.

- It is more about predicting the output than calculating it.

- Development: AI systems are not rule-based; instead, they learn from examples. Large volumes of training examples are fed to an AI system, which learns from them and builds a model that makes predictions on new, unseen data.

- Accuracy: Because AI systems are not designed for fixed inputs and outputs, their accuracy depends on the quality of the training data, the models used, the feature engineering, and the input data itself. If an input is very different from the training examples, accuracy is likely to suffer.

- Defects/Errors: Because AI systems are non-deterministic, defects cannot be fixed just by changing the code. Finding defects in an AI system depends on the imagination and creativity of the tester. Metamorphic testing, which checks that known relationships between inputs and outputs hold instead of checking exact outputs, is one approach to testing AI systems.
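Metamorphic testing works around the missing test oracle: instead of asserting the exact output for an input, it asserts how the output should (or should not) change when the input is transformed. The sketch below applies three metamorphic relations to a toy 1-nearest-neighbor classifier written only for illustration; `predict_1nn`, the synthetic dataset, and the chosen transformations are hypothetical, not a QASource tool. For Euclidean distance, translating every point, uniformly scaling every point, or reordering the training data should leave each prediction unchanged. A real system would use relations derived from its own domain.

```python
import math
import random

def predict_1nn(train, query):
    """1-nearest-neighbor classifier: return the label of the closest training point."""
    _, label = min(train, key=lambda item: math.dist(item[0], query))
    return label

def translate(point, offset):
    return tuple(p + o for p, o in zip(point, offset))

def scale(point, factor):
    return tuple(p * factor for p in point)

rng = random.Random(42)
# Hypothetical 2-D dataset: label is 1 when x + y > 1, else 0.
train = []
for _ in range(50):
    point = (rng.random(), rng.random())
    train.append((point, int(sum(point) > 1)))
queries = [(rng.random(), rng.random()) for _ in range(100)]

# Follow-up training sets, one per metamorphic relation.
offset, factor = (10.0, -5.0), 3.0
moved = [(translate(p, offset), y) for p, y in train]
scaled = [(scale(p, factor), y) for p, y in train]
shuffled = train[:]
rng.shuffle(shuffled)

violations = []
for q in queries:
    source = predict_1nn(train, q)
    # MR1: translating every point by the same offset must not change the prediction.
    if predict_1nn(moved, translate(q, offset)) != source:
        violations.append(("translate", q))
    # MR2: scaling all coordinates by the same positive factor must not change it.
    if predict_1nn(scaled, scale(q, factor)) != source:
        violations.append(("scale", q))
    # MR3: the order of the training data must not change it
    # (exact distance ties, which would break this, are practically impossible here).
    if predict_1nn(shuffled, q) != source:
        violations.append(("shuffle", q))

print(f"{len(violations)} metamorphic violations out of {3 * len(queries)} checks")
```

None of these checks needs to know the "correct" label for a query; a violation signals a defect in the model or the code around it even when no ground truth is available.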

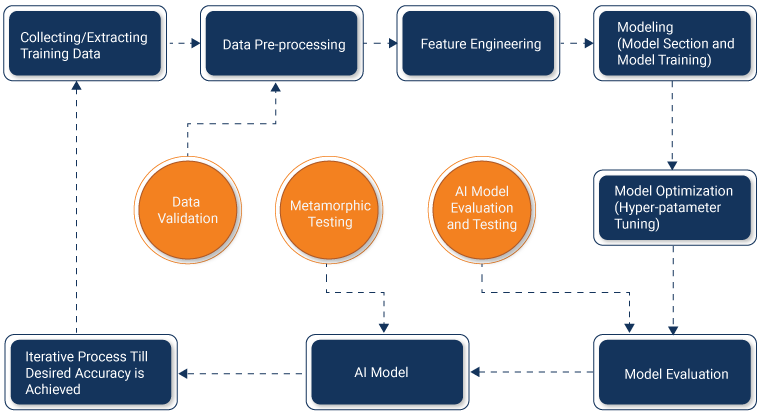

AI Workflow

To create the right testing strategy for AI systems, QA teams must understand the workflow of an AI system.

- AI models are developed and improved in iterative phases.

- The data science team collects training data and performs pre-processing, feature engineering, modeling (model selection and model training), and model optimization (hyperparameter tuning), followed by model evaluation.

- If the model's evaluation results on the test datasets are not satisfactory, the data science team goes back to collect more training data, improve feature engineering, or implement new models to increase accuracy.

- AI systems do not reach the desired results and accuracy in one go; getting there is an iterative process. Because production models are retrained on new datasets, they must be re-evaluated regularly.
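Because every retraining cycle produces a new model, one common safeguard is an automated gate that re-evaluates the candidate on a fixed, labeled test set and compares it with the model currently in production. The sketch below is one minimal way to express such a gate, assuming a binary classifier; `evaluate`, `accept_retrained`, the `tolerance` of 0.01, and the toy predictions are all hypothetical and chosen for illustration.

```python
from dataclasses import dataclass

@dataclass
class EvalResult:
    accuracy: float
    precision: float
    recall: float

def evaluate(predictions, labels):
    """Compute accuracy, precision, and recall for binary labels (1 = positive)."""
    pairs = list(zip(predictions, labels))
    tp = sum(p == 1 and y == 1 for p, y in pairs)
    fp = sum(p == 1 and y == 0 for p, y in pairs)
    fn = sum(p == 0 and y == 1 for p, y in pairs)
    correct = sum(p == y for p, y in pairs)
    return EvalResult(
        accuracy=correct / len(pairs),
        precision=tp / (tp + fp) if tp + fp else 0.0,
        recall=tp / (tp + fn) if tp + fn else 0.0,
    )

def accept_retrained(baseline, candidate, tolerance=0.01):
    """Promote the retrained model only if no metric drops by more than `tolerance`."""
    return all(
        getattr(candidate, metric) >= getattr(baseline, metric) - tolerance
        for metric in ("accuracy", "precision", "recall")
    )

# Hypothetical test-set labels and predictions from three model versions.
labels     = [1, 0, 1, 1, 0, 0, 1, 0, 1, 0]
production = [1, 0, 1, 0, 0, 0, 1, 0, 1, 1]
retrained  = [1, 0, 1, 1, 0, 0, 1, 0, 0, 0]
regressed  = [0, 0, 1, 1, 0, 0, 0, 0, 1, 0]

baseline = evaluate(production, labels)
print(accept_retrained(baseline, evaluate(retrained, labels)))  # True
print(accept_retrained(baseline, evaluate(regressed, labels)))  # False: recall drops from 0.8 to 0.6
```

Checking several metrics matters: the regressed model keeps the production model's overall accuracy (0.8) yet misses more positives, which an accuracy-only gate would let through.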

Role of QASource in Delivering AI QA Expertise

At QASource, we have extensive experience in delivering effective and efficient AI QA for machine/deep learning applications, NLP, computer vision, speech recognition, and robotics. Our dedicated team of QA experts is well versed in AI workflows, model evaluation, and testing techniques, and has experience delivering AI QA for different domains such as computer vision, classification, speech analytics, and object recognition.

Key Takeaways

- Unlike the fixed outputs of traditional software systems, the outputs of AI systems are non-deterministic and probabilistic, so AI systems cannot be tested effectively using traditional QA methods alone.

- The right AI QA strategy requires methods that can evaluate accuracy and check the variance in results.

- Unlike traditional software systems, AI systems' outputs change over time as models are retrained, so they require continuous evaluation.

- At QASource, we have extensive experience in delivering effective and efficient AI-based software testing for machine/deep learning, computer vision, and NLP applications.

To learn more about how using AI for QA testing can enhance your software reliability and quality, contact QASource now.

Have Suggestions?

We would love to hear your feedback, questions, comments, and suggestions. They will help us make our content better and more useful next time.

Share your thoughts and ideas at knowledgecenter@qasource.com